
Introduction

In the world of programming, not all applications are created equal. Some are CPU-heavy, while others spend a significant amount of time waiting for input/output (IO) operations, which leads to performance bottlenecks. In the latter case, concurrency becomes a superhero, swooping in to rescue your application from sluggishness. This blog post explores how concurrency in Ruby can be a game-changer for IO-bound applications, making them faster and more responsive.

Understanding the IO Challenge

IO-bound applications, as the name suggests, spend a substantial portion of their time waiting for input or output operations to complete. This could be reading from or writing to files, making network requests, or interacting with databases. In a traditional, sequential approach, each operation is executed one after the other, leading to idle time where the application is simply waiting.

Threads to the Rescue

Ruby provides a built-in mechanism for concurrency through threads. MRI Ruby’s Global Interpreter Lock (GIL, also known as the Global VM Lock) prevents threads from executing Ruby code in parallel, but threads are still a powerful tool for concurrent programming. When a thread blocks on IO, it releases the lock, and the interpreter switches to another thread that is ready to run, so the time one thread spends waiting is put to use by the others. That gives an advantage even with the GIL in place.

Benefits of Concurrency: Overlapping IO Operations

The real magic happens when concurrency allows IO operations to overlap. While one thread is waiting for a response from a server, another can start its IO operation. This overlapping of tasks significantly reduces the overall wait time, making your application more responsive.
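To make the overlap concrete, here is a minimal sketch in which `sleep` stands in for a blocking IO call. The helper names (`fake_io`, `timed`, `run_sequential`, `run_threaded`) are made up for this illustration and are not part of the download script discussed later.

```ruby
# sleep stands in for a blocking IO call (network request, disk read, ...).
def fake_io(id, seconds)
  sleep(seconds)
  id
end

# Returns [block result, elapsed seconds] using a monotonic clock.
def timed
  start = Process.clock_gettime(Process::CLOCK_MONOTONIC)
  result = yield
  [result, Process.clock_gettime(Process::CLOCK_MONOTONIC) - start]
end

# One simulated IO call after another: the waits add up.
def run_sequential(count, seconds)
  timed { (1..count).map { |id| fake_io(id, seconds) } }
end

# One thread per simulated IO call: the waits overlap.
def run_threaded(count, seconds)
  timed do
    threads = (1..count).map { |id| Thread.new { fake_io(id, seconds) } }
    threads.map(&:value) # value joins the thread and returns its result
  end
end

_, sequential_time = run_sequential(5, 0.2)
_, threaded_time = run_threaded(5, 0.2)
puts format("sequential: %.2fs, threaded: %.2fs", sequential_time, threaded_time)
```

With five simulated calls of 0.2 seconds each, the sequential run should take about as long as all the waits added together, while the threaded run should take roughly as long as a single wait.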

Example Program

Let’s understand this with an example. The problem statement is: I need a Ruby script to download a huge file from the internet. I have two approaches to prepare this script:

- Sequential download – The traditional approach: download the file byte by byte, from start to finish, in a single request.

- Concurrent download – Say I have to download a 1000 MB file. I spawn 10 worker threads, each of which downloads a 100 MB chunk (using an HTTP Range request), and the parent thread combines these chunks. While one thread waits for its chunk to arrive, the CPU context-switches and spends a slice of its time on a different thread in the scheduler queue.
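The concurrent approach can be sketched roughly as follows. This is not the exact script behind the benchmarks below. It assumes the file size is already known (for example from a `Content-Length` header) and that the server honours `Range` requests. The names `byte_ranges`, `fetch_range` and `concurrent_download` are hypothetical.

```ruby
require "net/http"
require "uri"

# Split total_bytes into at most `workers` contiguous, inclusive byte ranges.
def byte_ranges(total_bytes, workers)
  chunk = (total_bytes.to_f / workers).ceil
  (0...workers).map do |i|
    first = i * chunk
    last = [first + chunk - 1, total_bytes - 1].min
    first <= last ? (first..last) : nil
  end.compact
end

# Fetch one chunk. Assumes the server answers 206 Partial Content.
def fetch_range(uri, range)
  request = Net::HTTP::Get.new(uri)
  request["Range"] = "bytes=#{range.first}-#{range.last}"
  response = Net::HTTP.start(uri.host, uri.port, use_ssl: uri.scheme == "https") do |http|
    http.request(request)
  end
  raise "expected 206, got #{response.code}" unless response.is_a?(Net::HTTPPartialContent)
  response.body
end

# Worker threads download the chunks; the parent thread writes them in order.
# Each chunk is held in memory until written, which is a simplification.
def concurrent_download(url, total_bytes, workers, path)
  uri = URI(url)
  threads = byte_ranges(total_bytes, workers).map do |range|
    Thread.new { fetch_range(uri, range) }
  end
  File.open(path, "wb") do |file|
    threads.each { |thread| file.write(thread.value) }
  end
end
```

For the 1000 MB example above, `byte_ranges(1000 * 1024 * 1024, 10)` yields ten ranges of 100 MB each. A real script would likely stream each chunk to a temporary file rather than keep it in memory.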

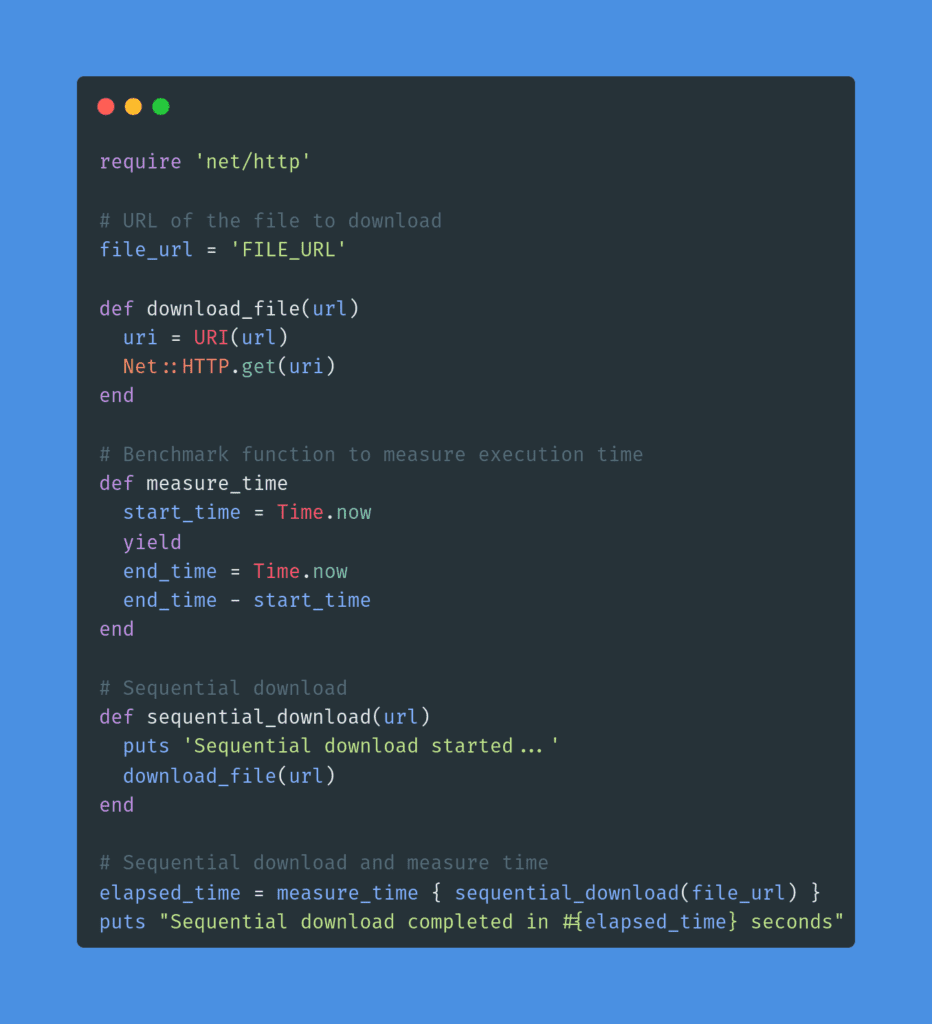

Sequential Download

The benchmark I observed: on average, it takes around 21 minutes to download an 800 MB file.

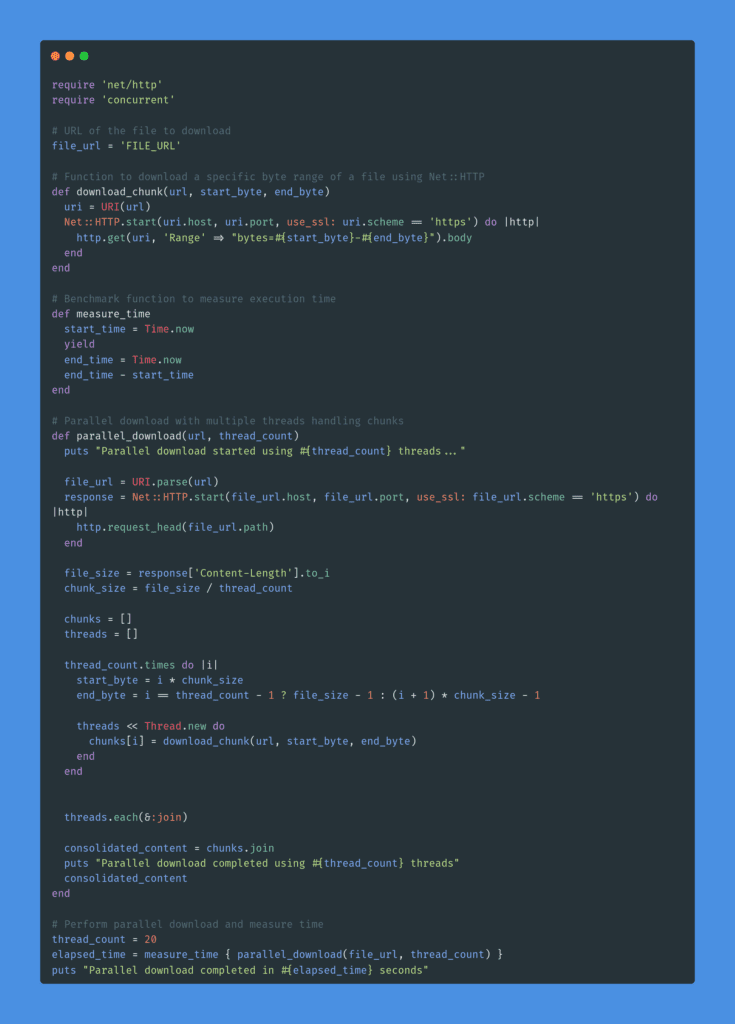

Concurrent Download

The benchmark I observed: on average, it takes around 7 minutes to download an 800 MB file using 20 threads. The image below visualises the time taken by sequential versus concurrent download.

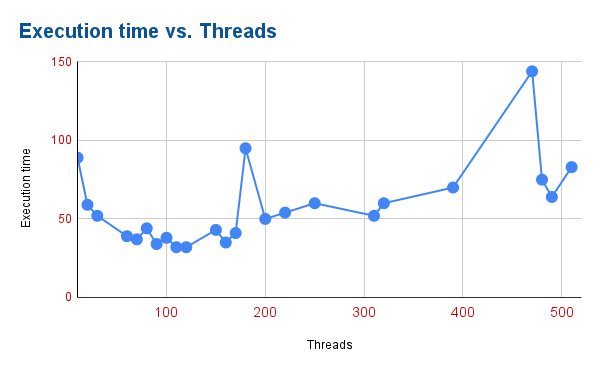

However, note that adding more threads does not keep improving performance, because every extra thread adds overhead for the CPU in managing the threads and in aggregating the results. The right thread count depends on the use case and the underlying machine architecture. Here’s the visualisation of the performance degradation on adding more threads.
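One common way to keep the thread count fixed regardless of how many chunks there are is a small worker pool that pulls jobs from a queue. The sketch below is a generic illustration using Ruby's built-in `Queue`. `run_with_pool` is a made-up helper, and the pool size is something to tune by measurement.

```ruby
# Run the block once per job, using at most pool_size threads.
# Results are returned in the same order as the jobs.
def run_with_pool(jobs, pool_size)
  queue = Queue.new
  jobs.each_with_index { |job, index| queue << [job, index] }
  queue.close # once closed and drained, pop returns nil and workers stop

  results = Array.new(jobs.size)
  workers = Array.new(pool_size) do
    Thread.new do
      while (item = queue.pop)
        job, index = item
        results[index] = yield(job) # each worker writes to its own slot
      end
    end
  end
  workers.each(&:join)
  results
end

# Usage: 20 simulated IO jobs handled by only 4 threads.
doubled = run_with_pool((1..20).to_a, 4) do |n|
  sleep(0.05) # stands in for a blocking IO call
  n * 2
end
puts doubled.inspect
```

Running the same workload with different `pool_size` values is a simple way to find the point where extra threads stop helping on a given machine.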

Potential Issues and Considerations

While concurrency is a powerful ally, it’s essential to be aware of potential issues. Thread safety, synchronization, and the GIL in Ruby can impact the effectiveness of concurrency. Proper care must be taken to ensure the safe and efficient execution of concurrent tasks.
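As a small illustration of the synchronization point: if several download threads update shared state, such as a running byte count, that update should be guarded. The `DownloadProgress` class below is a hypothetical example using Ruby's built-in `Mutex`.

```ruby
# Thread-safe byte counter shared by several worker threads.
class DownloadProgress
  def initialize
    @bytes = 0
    @lock = Mutex.new
  end

  # Only one thread at a time may run the read-modify-write on @bytes.
  def add(count)
    @lock.synchronize { @bytes += count }
  end

  def bytes
    @lock.synchronize { @bytes }
  end
end

progress = DownloadProgress.new
threads = Array.new(10) do
  Thread.new { 1_000.times { progress.add(1) } }
end
threads.each(&:join)
puts progress.bytes # prints 10000
```

The GIL does not make compound operations such as `@bytes += count` atomic, so the explicit lock is what guarantees the final count.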

Conclusion: Unlocking the Full Potential of Ruby

In conclusion, concurrency in Ruby is a valuable tool for optimizing IO-bound applications. By leveraging threads or fibers, developers can unlock performance improvements and create applications that gracefully handle IO operations, providing a smoother user experience.

As you embark on the journey of optimizing your IO-bound applications, consider the concurrency tools Ruby offers. Experiment, measure performance, and find the concurrency approach that best suits your application’s needs. With the right concurrency strategy, you can transform your sluggish application into a high-performance marvel.

References

https://www.toptal.com/ruby/ruby-concurrency-and-parallelism-a-practical-primer